DIY Price Monitoring: Is It Really Worth It?

A technical breakdown of DIY price monitoring with n8n, Apify, or custom code. Real costs, maintenance traps, and when to build vs buy SaaS.

The Appeal of DIY Price Monitoring

DIY price monitoring is worth it only if you're a technical retailer tracking fewer than 50 products. Beyond that, the total cost — $1,500–$8,600/year including maintenance time — exceeds what a dedicated SaaS tool costs ($1,188/year), and you lose product matching, historical data, and product discovery.

But building your own is genuinely satisfying. You pick your stack, control every detail, own the data, and avoid paying $99/mo to a SaaS vendor for something you could probably build in a weekend.

We get it. We're builders too. The BuyWisely platform that powers SellWisely started as a scraping project. We've spent three years maintaining scrapers across 10,000+ retailers, and we know firsthand what it takes.

That experience is exactly why we want to give you an honest accounting of what DIY price monitoring actually costs — not the weekend project version, but the "still running 12 months later" version.

The Real Cost Breakdown

Say you run a mid-size online store with 100 products and want to track prices across 5 competitors. This is what each approach actually costs.

Option 1: n8n + Bright Data

n8n is the strongest option for DIY price monitoring. It is source-available under a fair-code license, self-hostable, and ships with 10+ pre-built price monitoring templates. Its community of 500K users is active and helpful.

| Component | Monthly Cost |
|---|---|
| n8n Cloud Pro (10K executions) | $65 |
| Bright Data proxies (residential) | $50–200 |
| Google Sheets (storage) | Free |
| Total platform cost | $115–265/mo |

But platform cost is only half the story.

| Time Investment | Hours |
|---|---|
| Initial setup (customize template, configure URLs, connect integrations) | 5–10 |
| Monthly maintenance (fix broken scrapers, update selectors) | 1–3 |
| Annual maintenance total | 12–36 |

At $50/hour opportunity cost, that's $600–1,800/year in maintenance time on top of $1,380–3,180/year in platform fees. Total first year: $2,230–5,480. Year two onwards: $1,980–4,980.
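The arithmetic above can be reproduced with a small helper. This is a minimal sketch: the function name `annual_cost` and its parameters are illustrative, and the $50/hour default is the opportunity-cost assumption used in this article, not a universal figure.

```python
def annual_cost(platform_per_month: float,
                maintenance_hours_per_month: float,
                setup_hours: float = 0.0,
                hourly_rate: float = 50.0) -> float:
    """Platform fees plus the opportunity cost of time for one year."""
    platform = platform_per_month * 12
    hours = maintenance_hours_per_month * 12 + setup_hours
    return platform + hours * hourly_rate


# n8n + Bright Data, low and high ends of the ranges in the tables above.
print(annual_cost(115, 1, setup_hours=5))    # year 1, low end:  2230.0
print(annual_cost(265, 3, setup_hours=10))   # year 1, high end: 5480.0
print(annual_cost(115, 1))                   # year 2+, low end:  1980.0
print(annual_cost(265, 3))                   # year 2+, high end: 4980.0
```

Swapping in your own hourly rate is the quickest way to see how sensitive the comparison is to what your time is worth.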

Option 2: Apify Actors

Apify gives you 10,000+ pre-built scrapers ("Actors") and handles proxies and infrastructure. More turnkey than n8n for scraping specifically, but credit-based pricing means costs scale with usage.

| Component | Monthly Cost |
|---|---|
| Apify Personal plan | $49 |
| Credit overages (100 products daily) | $15–30 |
| Total platform cost | $64–79/mo |

Setup time is similar to n8n (5–10 hours). Maintenance is lower because Apify manages some of the infrastructure, but Actors still break when target websites update their layouts: "Actors break when platforms update their UI" is a common complaint across Apify's 378 Trustpilot reviews. Budget 1–2 hours/month for maintenance.

Option 3: Custom Python/Node.js

Full control. You write the scraper, run it on your server, store data in your database.

| Component | Monthly Cost |
|---|---|
| VPS (DigitalOcean/Hetzner) | $5–50 |
| Proxies (Bright Data/Oxylabs) | $50–200 |
| Code / libraries | Free |
| Total platform cost | $55–250/mo |

| Time Investment | Hours |
|---|---|
| Initial build | 10–40 |
| Monthly maintenance (selectors, anti-bot, dependency updates) | 3–6 |
| Annual maintenance total | 36–72 |

At $50/hour, that's $1,800–3,600/year in maintenance time, plus $500–2,000 for the initial build. Added to $660–3,000/year in platform fees, the first year total with setup is $2,960–8,600. This is the most expensive option once you account for developer time.

Option 4: Browse AI

Browse AI markets itself as no-code scraping. Point, click, extract. Their Chrome extension has 4 million installs for a reason — the initial experience is genuinely good.

| Component | Monthly Cost |
|---|---|
| Browse AI Professional | $99–123 |
| Total platform cost | $99–123/mo |

The Professional plan includes 6,000 credits per month. If each product check costs 10 credits, that is roughly 600 checks per month, or about 20 products monitored daily. Scale beyond that and you're buying more credits or upgrading to custom pricing.

Browse AI claims 99%+ uptime with AI-powered adaptation when websites change. Reviews paint a more mixed picture: it works well for simple sites but struggles with heavily protected or complex e-commerce pages.

The All-In Comparison

| Approach | Platform Cost/Year | Time Cost/Year | Total Year 1 | Total Year 2+ |
|---|---|---|---|---|
| n8n + Bright Data | $1,380–3,180 | $850–2,300 | $2,230–5,480 | $1,980–4,980 |
| Apify | $768–948 | $600–1,300 | $1,618–2,498 | $1,368–2,248 |
| Custom code | $660–3,000 | $2,300–5,600 | $2,960–8,600 | $2,460–6,600 |
| Browse AI | $1,188–1,476 | $300–600 | $1,488–2,076 | $1,488–2,076 |
| SellWisely Pro | $1,188 | $0 | $1,188 | $1,188 |

The platform cost advantage of DIY shrinks or disappears entirely once you factor in time. And if you're a growing retailer whose time is worth more than $50/hour, the math tilts even further toward SaaS.

The True Cost of DIY: DIY price monitoring costs $1,500–$8,600 per year when you account for development, hosting, and maintenance time.

The Maintenance Trap

The part nobody warns you about: the build is the easy part.

Websites change. Constantly. A retailer redesigns their product page layout, switches front-end frameworks, adds bot detection, or restructures their URLs. When that happens, your scraper breaks silently. You don't find out until you check your data and notice gaps, or worse — you make a pricing decision based on stale data you didn't realize was stale.
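One inexpensive guard against silent breakage is to track when each product was last scraped successfully and flag anything that has gone quiet. The sketch below assumes you already record a last-success timestamp per product. The name `find_stale`, the product IDs, and the 36-hour threshold are illustrative choices, not part of any tool mentioned here.

```python
from datetime import datetime, timedelta, timezone


def find_stale(last_seen: dict[str, datetime],
               now: datetime,
               max_age: timedelta = timedelta(hours=36)) -> list[str]:
    """Return product IDs whose latest successful scrape is older than max_age."""
    return sorted(pid for pid, ts in last_seen.items() if now - ts > max_age)


# Fixed "now" so the example is reproducible.
now = datetime(2024, 6, 10, 12, 0, tzinfo=timezone.utc)
last_seen = {
    "sku-1": now - timedelta(hours=2),   # scraped this morning
    "sku-2": now - timedelta(days=21),   # silently broken three weeks ago
}
print(find_stale(last_seen, now))  # ['sku-2']
```

Running a check like this on a schedule and sending the result to email or Slack turns "you notice three weeks later" into "you notice the next day".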

Specific examples from the wild:

Anti-bot detection escalation. Cloudflare, PerimeterX, and Akamai are getting more aggressive every year. A scraper that worked fine six months ago starts getting blocked. You need to rotate proxies, manage browser fingerprints, add delays. Each retailer is a small arms race.

Layout changes break selectors. A retailer moves their price from span.price to div.product-price > span.amount. Your CSS selector returns nothing. If you're lucky, you get an error. If you're unlucky, your scraper returns null and silently inserts blank rows into your Google Sheet. You notice three weeks later.

Dynamic rendering. More sites are using client-side JavaScript to load pricing. Your HTTP request gets a page shell with no data. Now you need headless browser rendering (Puppeteer, Playwright), which is slower, more resource-intensive, and more fragile.

Rate limiting and IP bans. Hit a site too frequently and you get blocked. Too many concurrent requests from the same IP range triggers alerts. You start managing proxy pools, request timing, and retry logic — infrastructure concerns that have nothing to do with price monitoring.
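The silent-null failure from the selector example above is avoidable if the parsing step refuses to accept an empty or implausible value. The following is a minimal sketch, assuming US-style price strings such as "$1,299.00". `PriceExtractionError` and `parse_price` are hypothetical names, and a real pipeline would need locale handling that this version does not attempt.

```python
import re
from decimal import Decimal
from typing import Optional


class PriceExtractionError(ValueError):
    """Raised when scraped text cannot be turned into a plausible price."""


# Assumes US-style formatting: comma thousands separators, dot for cents.
_PRICE_RE = re.compile(r"\d[\d,]*(?:\.\d{1,2})?")


def parse_price(raw: Optional[str]) -> Decimal:
    """Parse scraped text into a positive Decimal, or fail loudly."""
    if raw is None or not raw.strip():
        # A changed layout usually shows up here: the selector matched nothing.
        raise PriceExtractionError("selector returned no text")
    match = _PRICE_RE.search(raw)
    if match is None:
        raise PriceExtractionError(f"no numeric price in {raw!r}")
    price = Decimal(match.group().replace(",", ""))
    if price <= 0:
        raise PriceExtractionError(f"implausible price {price} from {raw!r}")
    return price


print(parse_price("$1,299.00"))  # 1299.00
```

The design choice is simply to raise instead of writing a blank row: a failed run is visible, while a blank cell in a spreadsheet is not.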

After reviewing hundreds of community threads on Reddit (r/ecommerce, r/webdev, r/selfhosted) and the n8n Discord, one pattern stands out: DIY builders spend about half their maintenance time on the actual price monitoring logic and the other half fighting infrastructure issues.

"I spent 8 hours last weekend fixing my workflow" is the canonical complaint. It shows up in n8n community forums, Apify reviews, and countless Reddit threads. The first time it's annoying. The third time, you start questioning whether the control was worth it.

The Scale Ceiling

DIY price monitoring has a well-defined scaling limit. The pattern is consistent across every approach we've analyzed:

1–20 products: Comfortable. Setup investment pays off. Maintenance is minimal — maybe 30 minutes a month.

20–50 products: Friction starts. You're managing more URLs, more selectors, more failure modes. Maintenance creeps up to 1–2 hours per month. Still manageable.

50–100 products: The breaking point for most DIY setups. Debugging takes longer because failures compound — one broken selector affects 15 products, and you're not sure which data points are stale. n8n execution limits start mattering (10K/month on Pro). Maintenance hits 3–5 hours per month.

The 50-Product Ceiling: 50 products is the practical ceiling for DIY monitoring. Beyond that, the maintenance burden exceeds the value gained.

100+ products: Not realistic without a dedicated developer. The maintenance burden is essentially a part-time job. Proxy costs scale linearly. You're spending more time on infrastructure than on making pricing decisions.

This ceiling exists because maintenance burden grows non-linearly with product count. Each additional product adds a small chance that something breaks in a given week, and those chances compound: at 100 products, the probability that at least one thing breaks every week approaches 100%.

Weekly Breakage: At 100+ products, there's effectively a 100% probability that something breaks every week: scrapers fail, sites change, data corrupts.
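To see how quickly small per-product risks compound, here is a rough illustration. It assumes each tracked product has an independent 3% chance of breaking in a given week. Both the 3% figure and the independence assumption are hypothetical simplifications, since in practice one layout change often breaks many products at once.

```python
def weekly_breakage_probability(products: int, p_break: float) -> float:
    """P(at least one of `products` scrapers breaks in a week), assuming independence."""
    return 1 - (1 - p_break) ** products


for n in (20, 50, 100):
    print(n, round(weekly_breakage_probability(n, 0.03), 2))
# 20 0.46
# 50 0.78
# 100 0.95
```

Under these assumptions, a 20-product setup has a quiet week more often than not, while a 100-product setup almost never does.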

What DIY Can't Do

Beyond the cost and maintenance issues, DIY approaches have structural gaps that no amount of engineering can easily close:

Product matching. "iPhone 15 Pro 256GB Space Black" on one site is "Apple iPhone 15 Pro (256GB) - Space Black" on another and "iPhone15Pro 256gb Black" on a third. Matching these requires fuzzy matching algorithms (Levenshtein distance, tokenization, brand normalization) that are non-trivial to build and maintain. Most DIY setups skip this entirely — you manually map products in a spreadsheet, which defeats the purpose of automation.
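To give a sense of what even a basic matcher involves, here is a minimal sketch using tokenization, a tiny brand list, and token-set (Jaccard) overlap. It is deliberately not Levenshtein distance, and `BRAND_WORDS`, `tokens`, and `match_score` are hypothetical names. It treats any difference in numeric tokens (for example 256 vs 512) as a hard mismatch, which is a simplifying assumption.

```python
import re

# Hypothetical brand list; a real one would need to cover your whole catalog.
BRAND_WORDS = {"apple"}


def tokens(title: str) -> set[str]:
    """Lowercase, split letters from digits ("256GB" -> "256", "gb"), drop brand words."""
    return set(re.findall(r"[a-z]+|\d+", title.lower())) - BRAND_WORDS


def match_score(a: str, b: str) -> float:
    """Jaccard overlap of tokens; 0.0 if numeric tokens (model, storage) differ."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    if {t for t in ta if t.isdigit()} != {t for t in tb if t.isdigit()}:
        return 0.0
    return len(ta & tb) / len(ta | tb)


base = "iPhone 15 Pro 256GB Space Black"
print(match_score(base, "Apple iPhone 15 Pro (256GB) - Space Black"))   # 1.0
print(round(match_score(base, "iPhone15Pro 256gb Black"), 2))           # 0.86
print(match_score(base, "iPhone 15 Pro 512GB Space Black"))             # 0.0
```

Even this toy version needs a maintained brand list and a threshold for what counts as "close enough", which is where most of the ongoing effort goes.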

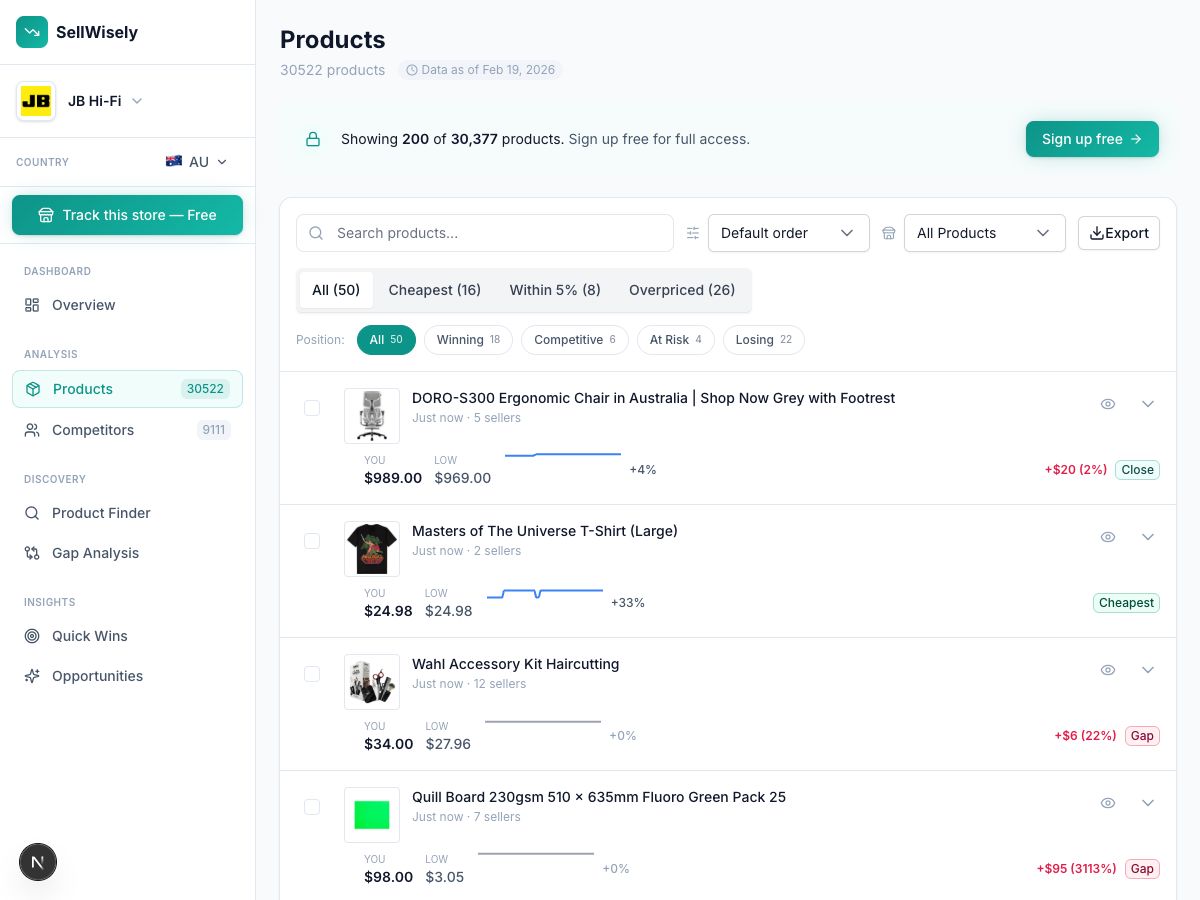

Pre-collected historical data. Your DIY system starts collecting data the day you build it. Want to know how competitors priced products last holiday season? Last year? Two years ago? That data doesn't exist. SaaS platforms with pre-collected data (like SellWisely, which has 3 years of historical pricing) provide this instantly.

Product discovery. DIY tracks what you tell it to track. It can't tell you "your competitor just started selling 15 products you don't carry." That kind of intelligence requires a database of millions of products across thousands of retailers — exactly the kind of thing that's expensive and slow to build from scratch.

When DIY Still Makes Sense

To be honest, there are situations where building your own is the right call.

You're learning. Building a scraper teaches you about HTTP, HTML parsing, data pipelines, and automation. If you're a developer early in your career, the educational value is real. Build it, learn from it, then decide whether to maintain it or switch to a service.

You have a tiny catalog (under 20 products). With fewer than 20 products, the maintenance burden is genuinely small. You can probably check your scraper once a month and fix issues in under an hour. At this scale, paying $99/mo for a SaaS tool might not make financial sense — especially if you're a solopreneur watching every dollar.

You need to scrape something unusual. If you're monitoring prices on a platform that no SaaS tool covers — say, a niche B2B marketplace, or a local classified site — DIY might be your only option. SellWisely covers 10,000+ retailers, but it doesn't cover everything.

You're already deep in the n8n/Make ecosystem. If you run 30 workflows in n8n for inventory management, order processing, and marketing automation, adding a price monitoring workflow is incremental. The marginal cost (both money and time) of one more workflow is low because you've already climbed the learning curve.

You genuinely enjoy it. Some people like maintaining infrastructure. If fixing scrapers on a Saturday afternoon is genuinely fun for you (no judgment — we've been there), the maintenance "cost" is more like a hobby.

The Integration Path: Best of Both Worlds

If you're a technical user who values control but is tired of maintaining scrapers, consider this: keep your automation workflows, replace the scraping layer.

Use a data API (SellWisely's or others) as the data source, and pipe that data into your existing n8n, Make, or Zapier workflows. You keep your custom alerting logic, your Google Sheets dashboards, your Slack notifications — everything stays the same. You just stop maintaining scrapers.

This is the coexistence model: SaaS handles the data collection (the expensive, maintenance-heavy part), and you handle the automation (the part where your custom logic actually adds value).

Your n8n workflow goes from:

Schedule → Bright Data scrape → Parse HTML → Compare prices → Alert

To:

Schedule → SellWisely API → Compare prices → Alert

Same result. One fewer thing to maintain.
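The "Compare prices → Alert" step is the part that stays yours in either version of the workflow. Below is a sketch of that logic in isolation. The row shape (`sku`, `competitor`, `price`) is a made-up example, not SellWisely's or any other vendor's actual response format, and `find_undercuts` is a hypothetical name.

```python
def find_undercuts(my_prices: dict[str, float],
                   competitor_rows: list[dict],
                   min_gap_pct: float = 5.0) -> list[tuple[str, str, float]]:
    """Return (sku, competitor, gap %) where a competitor undercuts us by min_gap_pct or more."""
    alerts = []
    for row in competitor_rows:
        mine = my_prices.get(row["sku"])
        if not mine:
            continue  # not in our catalog, or no usable price on our side
        gap_pct = (mine - row["price"]) / mine * 100
        if gap_pct >= min_gap_pct:
            alerts.append((row["sku"], row["competitor"], round(gap_pct, 1)))
    return alerts


my_prices = {"sku-1": 100.0, "sku-2": 40.0}
rows = [
    {"sku": "sku-1", "competitor": "shop-a", "price": 89.0},  # 11% cheaper
    {"sku": "sku-2", "competitor": "shop-b", "price": 39.5},  # within tolerance
    {"sku": "sku-3", "competitor": "shop-a", "price": 10.0},  # we don't sell this
]
print(find_undercuts(my_prices, rows))  # [('sku-1', 'shop-a', 11.0)]
```

Because the function only depends on the row shape, the data source behind it can change from a scraper to an API without touching the alerting logic.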

Making the Decision

Build a simple decision tree:

How many products do you need to track?

- Under 20: DIY is fine. Save the money.

- 20–50: DIY works but budget for growing maintenance.

- 50+: The maintenance burden probably exceeds SaaS cost. Use a service.

How technical is the person who'll maintain it?

- Developer comfortable with scraping: DIY is viable.

- Non-technical team: Use a service. Full stop.

What's your time worth?

- Calculate: (monthly maintenance hours × hourly rate) + platform cost. Compare to SaaS cost. Include setup time amortized over 12 months.

Do you need product matching and historical data?

- If yes: SaaS wins. Building this from scratch takes months, not weekends.
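The questions above can be written down as a small function. This is one possible encoding, with the 20 and 50 product thresholds taken from this article. The name `recommend` and the return strings are illustrative, and the "what's your time worth" question is left out because it needs your own cost figures.

```python
def recommend(products: int,
              maintainer_is_developer: bool,
              needs_matching_or_history: bool) -> str:
    """Encode the decision tree: non-technical teams and matching/history needs go to a service."""
    if not maintainer_is_developer:
        return "use a service"
    if needs_matching_or_history:
        return "use a service"
    if products < 20:
        return "diy"
    if products < 50:
        return "diy, with a maintenance budget"
    return "use a service"


print(recommend(15, True, False))    # diy
print(recommend(35, True, False))    # diy, with a maintenance budget
print(recommend(120, True, False))   # use a service
print(recommend(15, False, False))   # use a service
```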

If you're coming from spreadsheets, first check whether you've actually outgrown them — a spreadsheet tracking 20 products is fine. For a broader comparison of all the approaches — manual, DIY, traditional SaaS, and pre-collected platforms — see our complete guide to tracking competitor prices. Or check out our comparison of the best price monitoring tools to find the right fit.

Already maintaining scrapers? Try swapping the scraping layer for SellWisely's API — keep your n8n workflows, drop the proxy bills and selector debugging. Free tier covers 50 products.

Ready to track your competitors' prices?

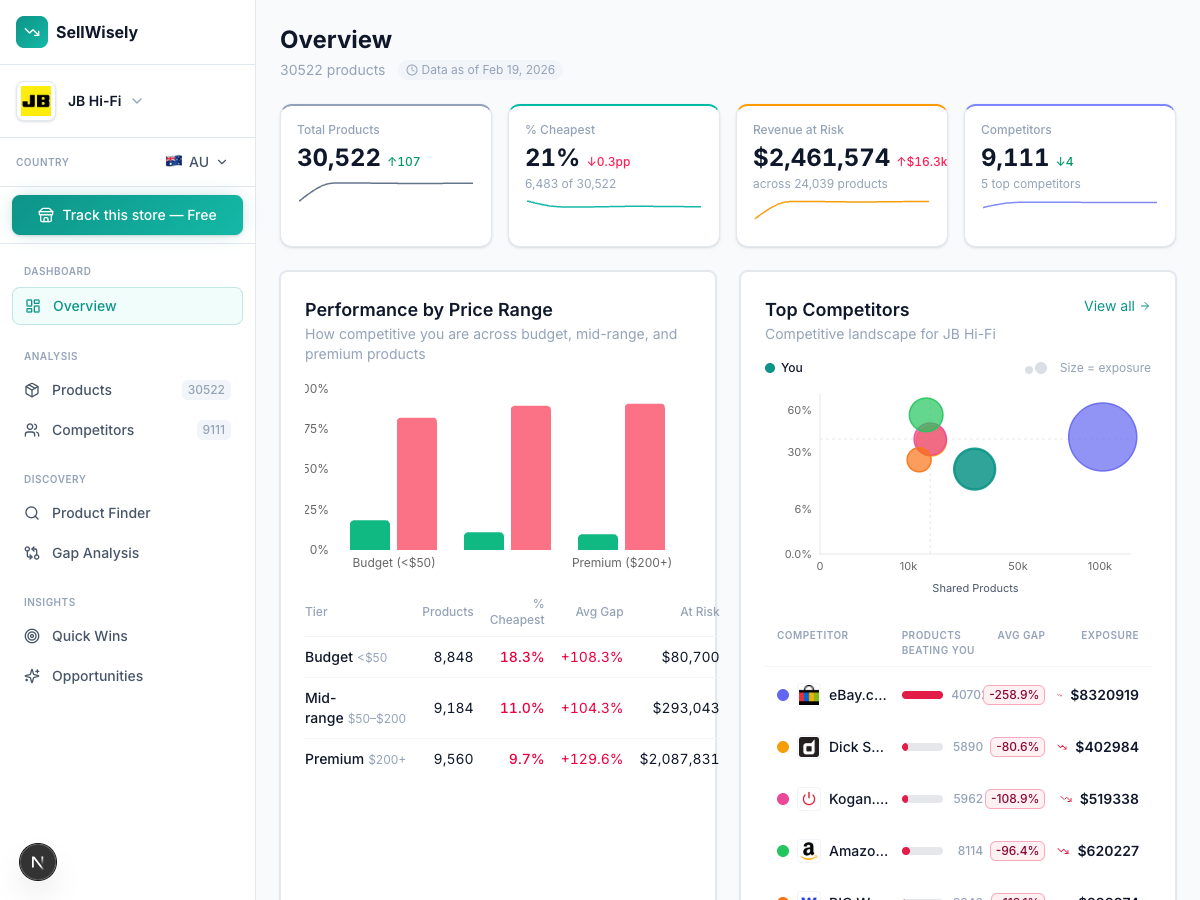

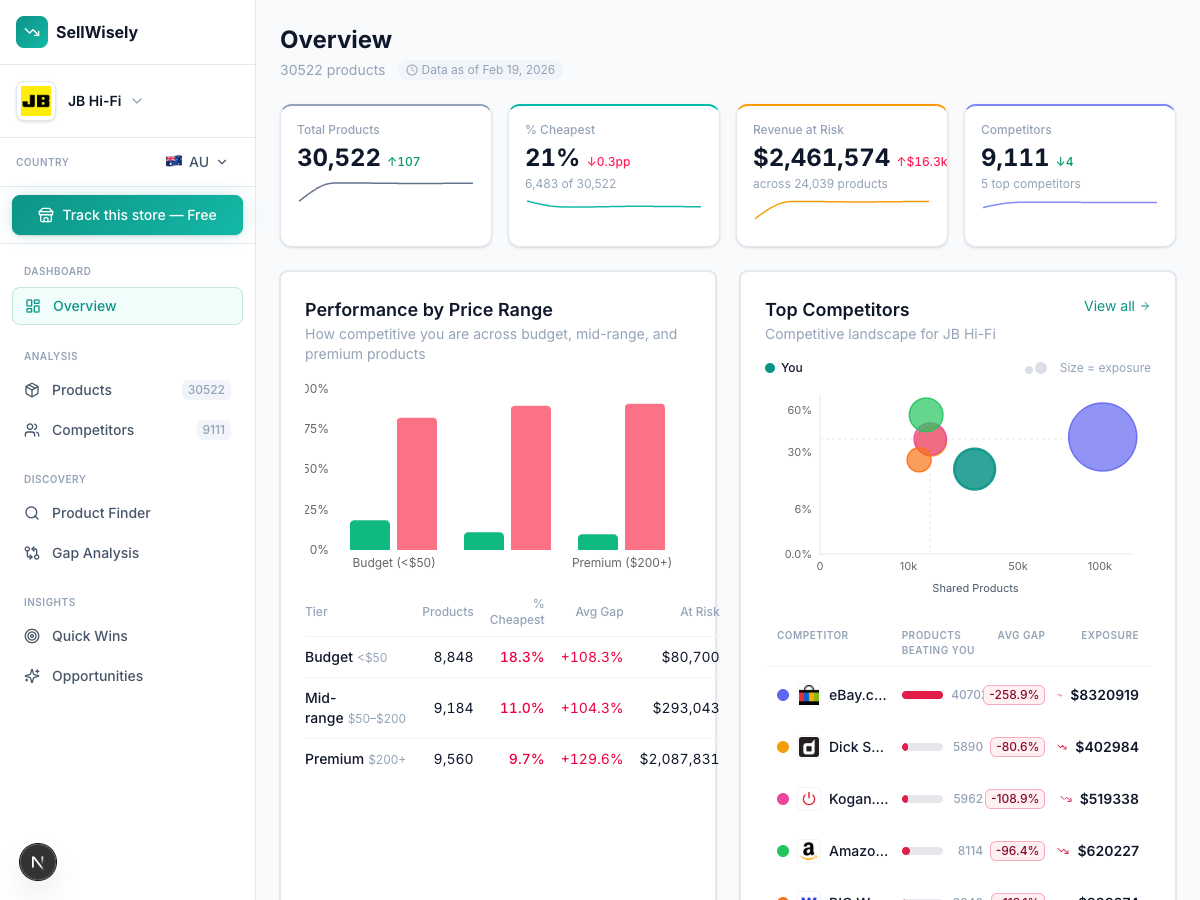

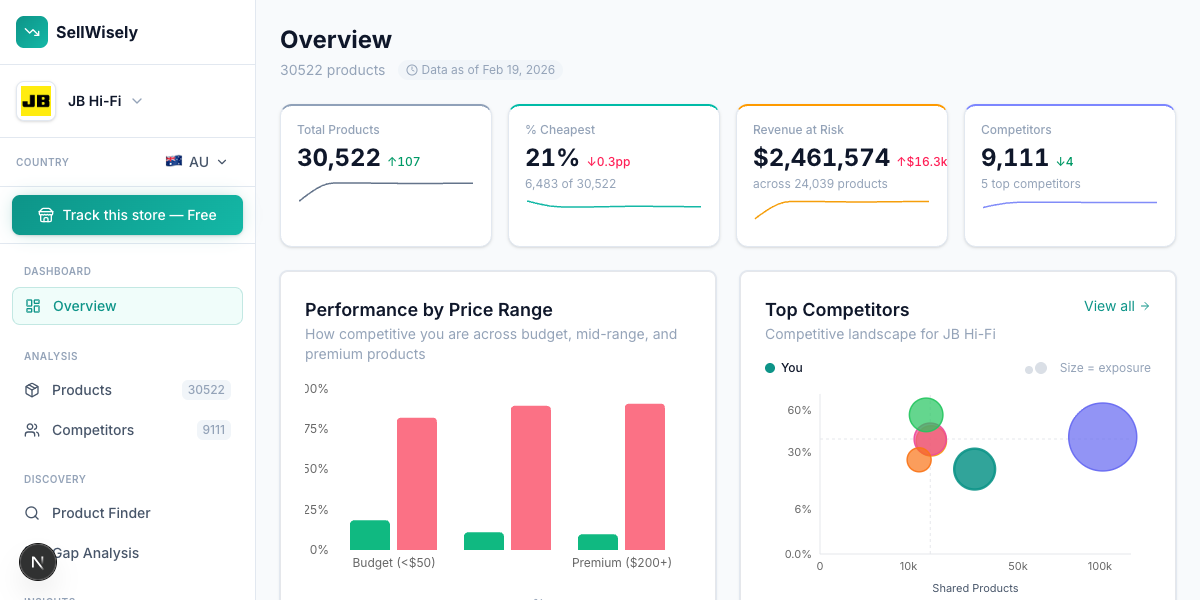

Enter your store URL and see competitor prices instantly. No signup required.

Try SellWisely Free